| One Stop for Testing and Tools |

Azure Service Fabric

Azure Service Fabric is a distributed systems platform that makes it easy to package, deploy, and manage scalable and reliable microservices and containers.

Why Service Fabric?

Azure Service Fabric aims to give developers a platform that handles many of the complexities typically associated with building cloud-based distributed applications.

Service Fabric is Microsoft's container orchestrator for deploying and managing microservices across a cluster of machines. Seen another way, it is a compute and storage platform that runs heterogeneous workloads at scale on a cluster of servers.

What are Microservices?

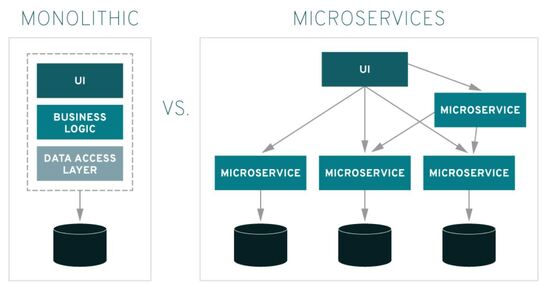

Microservices are an architectural style that develops a single application as a set of small services. Each service runs in its own process. The services communicate with clients, and often each other, using lightweight protocols, often over messaging or HTTP.

Microservices are highly maintainable and testable, loosely coupled, and independently deployable, so one team's changes won't break the entire app. The benefit of using microservices is that development teams can rapidly build new components of an app to meet changing business needs.

Instead of a monolithic app, you have several independent applications that can run on their own. You can create them using different programming languages and even different platforms. You can structure big and complicated applications with simpler and independent programs that execute by themselves. These smaller programs are grouped to deliver all the functionalities of the big, monolithic app. Since your teams are working on smaller applications and more focused problem domains, their projects tend to be more agile, too. They can iterate faster, address new features on a shorter schedule, and turn around bug fixes almost immediately. They often find more opportunities to reuse code, also.

Microservices in Azure

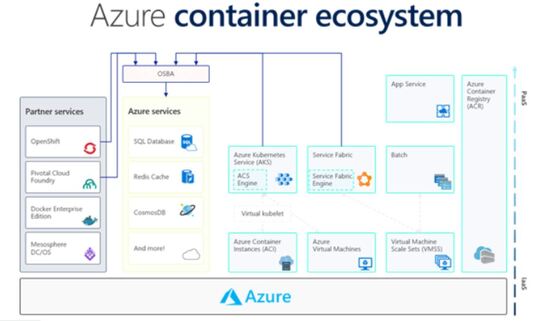

- Azure Container Service: Microsoft's implementation of open-source container technologies based on Docker.

- Azure Service Fabric: lets you focus on business objectives rather than infrastructure.

- Azure Functions: another viable option for building microservices. It lets you write functions in a language of your choice directly in the Azure portal. Each function essentially becomes a mini version of a microservice.

What is the difference between an API and Microservices?

Microservices are an architectural style for web applications in which the functionality is divided across small web services, whereas APIs are the interfaces through which developers interact with a web application.

How do you test Microservices?

Typically, an application would be composed of a number of microservices, so in order to test in isolation, we need to mock the other microservices.
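
As an illustration of testing one microservice in isolation, here is a minimal sketch using Python's standard `unittest.mock`. The `OrderService` class, its `pricing_client` dependency, and the `get_price` method are hypothetical names invented for this example; they stand in for whatever service and downstream dependency you actually have.

```python
from unittest.mock import Mock


class OrderService:
    """Hypothetical service under test; it depends on a separate pricing microservice."""

    def __init__(self, pricing_client):
        # pricing_client is any object exposing get_price(sku) -> float
        self.pricing_client = pricing_client

    def order_total(self, items):
        # items maps SKU -> quantity
        return sum(self.pricing_client.get_price(sku) * qty for sku, qty in items.items())


def make_mock_pricing_client(prices):
    """Build a stand-in for the pricing microservice that answers from a dict."""
    client = Mock()
    client.get_price.side_effect = lambda sku: prices[sku]
    return client


# The real pricing microservice is never called; the mock answers instead.
mock_pricing = make_mock_pricing_client({"apple": 2.0, "pear": 3.0})
service = OrderService(mock_pricing)
total = service.order_total({"apple": 2, "pear": 1})
```

Because the dependency is injected, the same `OrderService` code can run against the real pricing client in production and against the mock in tests.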

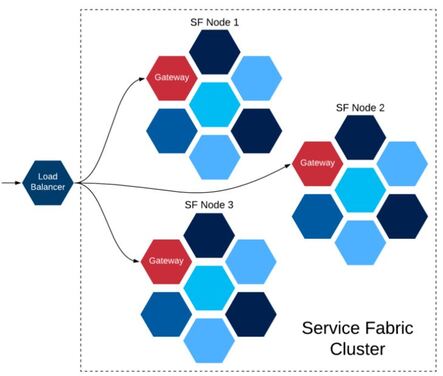

What is a Service Fabric cluster?

A Service Fabric cluster is a network-connected set of virtual or physical machines into which your microservices are deployed and managed. A machine or VM that is part of a cluster is called a cluster node.

Clusters can scale to thousands of nodes. If you add new nodes to the cluster, Service Fabric rebalances the service partition replicas and instances across the increased number of nodes. Overall application performance improves and contention for access to memory decreases. If the nodes in the cluster are not being used efficiently, you can decrease the number of nodes in the cluster. Service Fabric again rebalances the partition replicas and instances across the decreased number of nodes to make better use of the hardware on each node.

Typically in a Service Fabric cluster, there are several nodes (servers) contributing to its compute capacity and storage.
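
To make the rebalancing idea concrete, the sketch below spreads replicas over nodes round-robin and shows how the per-node load drops when a node is added. This is a deliberately simplified assumption for illustration, not Service Fabric's actual placement algorithm; the names `place_replicas` and `load_per_node` are hypothetical.

```python
from collections import Counter


def place_replicas(replicas, nodes):
    """Assign each replica to a node round-robin so counts differ by at most one."""
    if not nodes:
        raise ValueError("a cluster needs at least one node")
    return {replica: nodes[i % len(nodes)] for i, replica in enumerate(replicas)}


def load_per_node(placement):
    """Count how many replicas each node hosts."""
    return Counter(placement.values())


# 4 partitions with 3 replicas each = 12 replicas to place
replicas = [f"partition-{p}-replica-{r}" for p in range(4) for r in range(3)]

# Same replicas, first on a 3-node cluster, then after adding a fourth node
before = load_per_node(place_replicas(replicas, ["node-1", "node-2", "node-3"]))
after = load_per_node(place_replicas(replicas, ["node-1", "node-2", "node-3", "node-4"]))
```

With three nodes each node hosts four replicas; after the fourth node joins, each hosts three, which is the reduced contention the text describes.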

What is the role of the Load Balancer?

Here the load balancer works outside the Service Fabric cluster. The load balancer is not microservice-aware, so it simply distributes traffic across the nodes. It also health-checks each node, and if a node in the cluster fails, it stops forwarding traffic to it. In Service Fabric this is generally called the external load balancer.

If you run a Service Fabric cluster in Microsoft Azure, you can use Azure Load Balancer for load balancing. For an on-premises Service Fabric setup, you need to set up a load balancer on two servers so that it is not a single point of failure. A floating IP address is assigned to these servers so that one can take over if the other goes down (active-passive failover).

OR

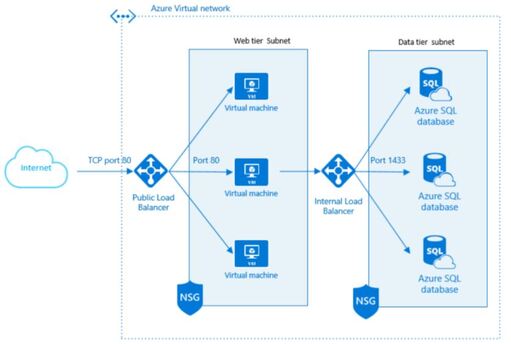

Azure Load balancer provides the distribution of Virtual Machine traffic to run your application smoothly in the production environment. All virtual machines connected to the backend of the Load balancer decide the traffic according to load on the per VM. Load balancer ensures the high availability of your application and its own fully managed service

An Azure load balancer is used to distribute traffic loads to backend virtual machines or virtual machine scale sets, by defining your own load balancing rules you can use a load balancer in a more flexible way.
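
The health-check behaviour of an external load balancer can be sketched as follows. This is a toy model under stated assumptions: it uses plain round-robin (chosen here only for simplicity) and a caller-supplied `probe` function; `ExternalLoadBalancer` and the node names are hypothetical and do not correspond to any Azure API.

```python
from itertools import cycle


class ExternalLoadBalancer:
    """Toy round-robin balancer that skips nodes whose health probe fails."""

    def __init__(self, nodes, probe):
        self.nodes = list(nodes)
        self.probe = probe  # callable: node -> bool (True means healthy)
        self._ring = cycle(self.nodes)

    def next_node(self):
        # Try each node at most once per request before giving up
        for _ in range(len(self.nodes)):
            node = next(self._ring)
            if self.probe(node):
                return node
        raise RuntimeError("no healthy nodes available")


# node-2 is failing its health probe, so it should never receive traffic
down = {"node-2"}
lb = ExternalLoadBalancer(["node-1", "node-2", "node-3"], probe=lambda n: n not in down)
routed = [lb.next_node() for _ in range(4)]
```

Note that the balancer knows nothing about which microservices run on a node; it only knows whether the node answers its probe.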

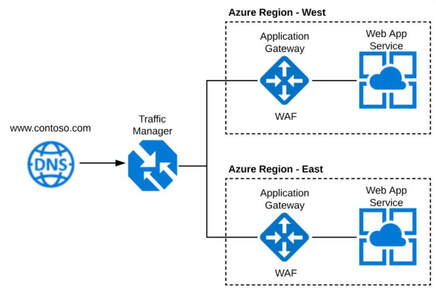

What is the role of the Application Gateway?

Application Gateway (AGW) is a web traffic load balancer that lets you manage traffic to one or more web applications.

What is the difference between Azure Traffic Manager and Load Balancer?

Azure Traffic Manager is designed to distribute traffic globally (multi-region environments). In contrast, Azure Load Balancer routes traffic inside a single Azure region, because it works with virtual machines in the same region.

What is Azure Traffic Manager?

Azure Traffic Manager is a cloud-based load balancing service that lets you control how user traffic is distributed to service endpoints in different datacenters. Traffic Manager is not a proxy or a gateway, and it does not see the traffic passing between the client and the service; it uses DNS to direct clients to the appropriate endpoint. Because Traffic Manager operates at the DNS level, there is no concept of an IP address for it: it works with DNS CNAME records and is not aware of the endpoint's IP address.

The main benefit of Azure Traffic Manager is better application performance: you can run cloud services or websites in datacenters around the world, and Traffic Manager improves responsiveness by directing traffic to the endpoint with the lowest network latency for the client.
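
The lowest-latency selection described above can be sketched in a few lines. This is an illustrative assumption about the idea only, not Traffic Manager's implementation; `pick_endpoint`, the latency figures, and the `example.com` host names are all made up for the example.

```python
def pick_endpoint(latency_ms, healthy):
    """Return the healthy endpoint with the lowest measured latency for a client."""
    candidates = {name: ms for name, ms in latency_ms.items() if name in healthy}
    if not candidates:
        raise LookupError("no healthy endpoint to return")
    return min(candidates, key=candidates.get)


# Hypothetical latencies (in milliseconds) as seen from one client's location
latency_from_client = {
    "app-westeurope.example.com": 24,
    "app-eastus.example.com": 95,
    "app-southeastasia.example.com": 180,
}
all_healthy = set(latency_from_client)

best = pick_endpoint(latency_from_client, all_healthy)
# If the closest endpoint is unavailable, the next-lowest latency wins
failover = pick_endpoint(latency_from_client, all_healthy - {"app-westeurope.example.com"})
```

The function returns a DNS name, not a connection: in the same spirit as the text, the client then talks to that endpoint directly.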

What are containers in Azure?

A container is a standard package of software that bundles an application's code together with the related configuration files, libraries, and dependencies required for the app to run. This allows developers and IT pros to deploy applications seamlessly across environments.

Containers are a form of operating system virtualization. A single container might be used to run anything from a small microservice or software process to a larger application. Inside a container are all the necessary executables, binary code, libraries, and configuration files. Compared to server or machine virtualization approaches, however, containers do not contain operating system images. This makes them more lightweight and portable, with significantly less overhead.

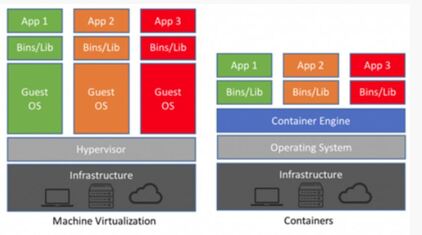

Whilst machine virtualisation operates at the hardware level and provides a way to run multiple instances of an operating system, containers on the other hand share the host operating system and run using isolated processes. The container engine allows each application (container) to run on top of the host operating system but remain isolated from each other.

Running Containers in Azure

– Web app for containers

Web App for Containers lets you run your custom Docker image in Azure App Service and take advantage of the managed platform service. This means you are not required to patch or provision virtual machines, and you can use App Service features such as autoscaling, Azure Active Directory integration, and custom domains.

When to use:

- If you need to take advantage of App Service features and run containers on a small scale.

– Azure Kubernetes Service (AKS)

Kubernetes is an open-source container and cluster management tool that is often referred to as an orchestration system. AKS is Microsoft's managed Kubernetes service; it reduces the configuration overhead of the cluster and integrates features such as identity, networking, and monitoring. Kubernetes is now regarded as the standard in container orchestration.

When to use:

- If you're looking to run containers at scale with flexible networking and customization options.

– Azure Container instances (ACI)

ACI is a serverless offering, which means it is billed on consumption rather than on pre-provisioned resources (virtual machines). It is designed to be a simple and fast way to get started with containers, and the underlying virtual machines are hidden from you, so there is nothing to manage. ACI can also provide 'virtual nodes' to form the backbone of a serverless cluster within AKS.

When to use:

- If you’re looking to get started with containers or have simple requirements (Dev/Test, Small web app etc) and only want to pay based on consumption.

– Azure Service Fabric

Azure Service Fabric is a proprietary Microsoft stack that incorporates its own development framework, tooling, scaling, and cluster management as a platform service. It can run guest executables as well as containers and was originally introduced as a platform for modernising Windows .NET applications in Azure. Now that other services support Windows containers, the relevance of Service Fabric to small and medium workloads is questionable.

When to use:

- Complex, large-scale deployments that rely heavily on native platform functionality.

What is a Docker image in Azure?

A Docker container image is a lightweight, standalone, executable package of software that includes everything needed to run an application: code, runtime, system tools, system libraries and settings.

For example, you can build custom Docker images of an ASP.NET Core application, push them to a private repository in Azure Container Registry (ACR), and use Azure DevOps to deploy them to Docker containers in Azure App Service (Linux).

What is an endpoint in Azure?

Service endpoints let you restrict access to your critical Azure service resources to only your virtual networks. They enable private IP addresses in the VNet to reach the endpoint of an Azure service without needing a public IP address on the VNet.

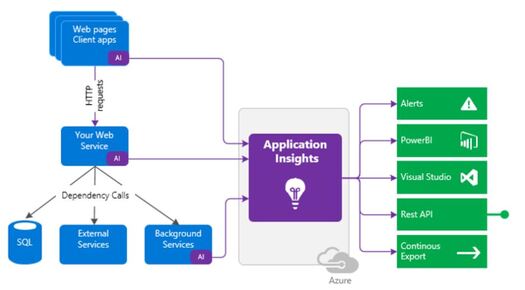

What is Application Insights?

Monitoring your website's performance is key to gaining insight into your customers and users. Application Insights is an application performance management service for web applications that lets you monitor your website's performance in Azure. It is designed to help you get optimal performance and a best-in-class user experience from your website. It also has a powerful analytics tool that helps you diagnose issues and understand how people use your web application.

Application Insights is mainly used to monitor live web applications and automatically detect performance anomalies.

Application Insights implementation process:

Install the instrumentation in your app and set up an Application Insights resource in the Azure portal. The instrumentation monitors your app and sends the data to the portal.

What are the different Service Fabric Service Types?

There are two main types of services you can build with Service Fabric:

- Stateless Services - no state is maintained in the service. Longer-term state is stored in an external database. This is the typical application/data layer approach to building services that you are likely already familiar with.

- Stateful Services - state is stored with the service. This allows state to be persisted without the need for an external database. Data is co-located with the code that runs the service.
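
The contrast between the two service types can be sketched in plain Python. This is only a conceptual illustration: the class names are hypothetical, a dict stands in for the external database, and the replication and persistence that a real stateful service needs are omitted entirely.

```python
class StatelessCounter:
    """Keeps nothing locally; every call reads and writes an external store."""

    def __init__(self, store):
        self.store = store  # stands in for an external database

    def increment(self, key):
        self.store[key] = self.store.get(key, 0) + 1
        return self.store[key]


class StatefulCounter:
    """Keeps its state next to the code, inside the service instance itself."""

    def __init__(self):
        self._state = {}

    def increment(self, key):
        self._state[key] = self._state.get(key, 0) + 1
        return self._state[key]


external_db = {}
# Two stateless instances share the external store, so either can serve a request
a, b = StatelessCounter(external_db), StatelessCounter(external_db)
a.increment("visits")
shared = b.increment("visits")

# The stateful instance owns its data; no external store is involved
stateful = StatefulCounter()
stateful.increment("visits")
local = stateful.increment("visits")
```

Any stateless instance can handle any request because the data lives elsewhere, whereas requests for a given key must reach the stateful instance that holds that key's data.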

What is Container Orchestration?

Container orchestration is the automation of all aspects of coordinating and managing containers. Container orchestration is focused on managing the life cycle of containers and their dynamic environments.

Why Do We Need Container Orchestration?

Container orchestration is used to automate the following tasks at scale:

• Configuring and scheduling of containers

• Provisioning and deployments of containers

• Availability of containers

• The configuration of applications in terms of the containers that they run in

• Scaling of containers to evenly balance application workloads across infrastructure

• Allocation of resources between containers

• Load balancing, traffic routing and service discovery of containers

• Health monitoring of containers

• Securing the interactions between containers.
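
Several of the tasks above (availability, scaling, health monitoring) come down to comparing a desired state with what is actually running. The sketch below shows one such reconciliation step. It is a simplified assumption for illustration, not the logic of any particular orchestrator; `reconcile` and the container names are hypothetical.

```python
def reconcile(desired_replicas, running, healthy):
    """Return (containers_to_stop, number_to_start) to reach the desired replica count."""
    # Unhealthy containers are always replaced
    to_stop = [c for c in running if c not in healthy]
    survivors = [c for c in running if c in healthy]
    # Scale down: stop surplus healthy containers
    if len(survivors) > desired_replicas:
        to_stop += survivors[desired_replicas:]
        survivors = survivors[:desired_replicas]
    # Scale up or replace: start enough new containers to reach the target
    to_start = desired_replicas - len(survivors)
    return to_stop, to_start


# Three replicas wanted, but web-2 is failing its health check
stop, start = reconcile(
    desired_replicas=3,
    running=["web-1", "web-2", "web-3"],
    healthy={"web-1", "web-3"},
)

# Scaling down from two healthy containers to one
scale_down = reconcile(desired_replicas=1, running=["a", "b"], healthy={"a", "b"})
```

An orchestrator runs a step like this continuously, so failures and scaling requests are both handled by the same loop.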